This blog post is part of a series on non-functional requirements (NFRs), and how the NFRs take most of the effort.

The scenario

You want a third party to implement an application package that allows people to buy and sell widgets from their phones. Once the package has been developed, they will hand it over to you to sell, support, maintain and upgrade, and you will be responsible for it.

The back end is a web server.

Requirements you have been given.

- We expect this application package to be used by all the major banks in the world.

- For the UK we expect about 10 million people to have an account.

- We expect about 1 million trades a day.

See start here for additional topics.

What does supported levels mean?

Your code may use code from other sources. If you experience problems with this code, you want to be able to get help diagnosing them, and to have the supplier provide fixes in a timely manner (within a week, rather than within a year).

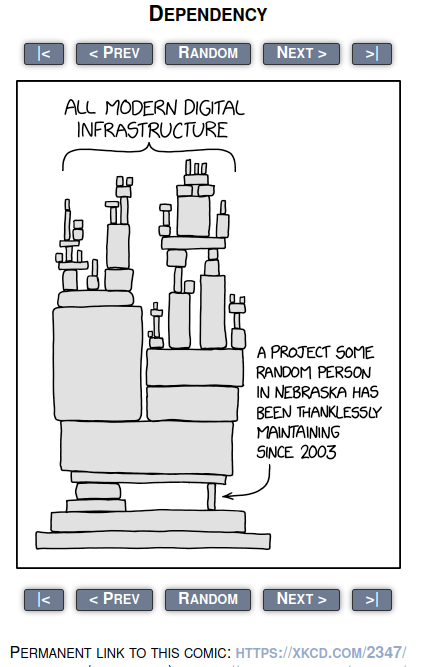

The following cartoon expresses this well.

You need to think about all the code you use. You may use a package which in turn uses an unsupported package. How are you going to know you are using this unsupported package, and how do you get support for it? This may affect your decision as to which packages you use. You may decide that an otherwise perfect package should not be used because it has a component which is not supported, and decide instead to use a not-so-good package which is supported.
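One way to find out whether you depend on an unsupported package indirectly is to walk the dependency graph and compare every package you reach against a list of packages you have support for. The following is a minimal sketch, not a real build-tool integration: the package names, the `dependsOn` map and the `supported` set are all made up for illustration, and in practice this data would have to come from your build tool or package manager.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

// Hypothetical sketch: package names and the dependency data are invented.
class UnsupportedDependencyScan {

    /**
     * Walks the dependency graph from root and returns every reachable
     * package (direct or transitive) that is not in the supported set.
     */
    static Set<String> findUnsupported(String root,
                                       Map<String, List<String>> dependsOn,
                                       Set<String> supported) {
        Set<String> visited = new LinkedHashSet<>();
        Set<String> unsupported = new LinkedHashSet<>();
        Deque<String> toVisit = new ArrayDeque<>();
        toVisit.push(root);
        while (!toVisit.isEmpty()) {
            String pkg = toVisit.pop();
            if (!visited.add(pkg)) {
                continue; // already seen; this also protects against cycles
            }
            // The root is your own code, so only its dependencies are checked.
            if (!pkg.equals(root) && !supported.contains(pkg)) {
                unsupported.add(pkg);
            }
            for (String dep : dependsOn.getOrDefault(pkg, List.of())) {
                toVisit.push(dep);
            }
        }
        return unsupported;
    }

    public static void main(String[] args) {
        // Assumed example data: widget-app uses http-lib, which uses old-crypto.
        Map<String, List<String>> dependsOn = Map.of(
            "widget-app", List.of("json-lib", "http-lib"),
            "http-lib", List.of("old-crypto"));
        Set<String> supported = Set.of("json-lib", "http-lib");
        System.out.println(findUnsupported("widget-app", dependsOn, supported));
        // prints [old-crypto]
    }
}
```

Here `http-lib` is supported, but it pulls in `old-crypto`, which is not. That is the situation described above: the unsupported package only shows up when you look past your direct dependencies.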

Supported levels

The software used should be at supported levels. For example, Java 7 went out of service many years ago, while Java 21 was released in September 2023.

If you are using unsupported code, and experience a problem, you may get no support, or have to pay a lot of money to get the support.

Over time you will need to create plans to upgrade the software used, so that everyone has current software which is neither too new (let other people find the bugs) nor too old (unsupported).

This will mean setting up test environments to test these new levels. You also need to plan how you will get your customers to upgrade.

If you need to make code changes to support a newer version, then you need to think about how you ship fixes to customers. You need to know which level each customer has, and send them the appropriate fix.
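Matching a fix to a customer's level can be pictured as a simple lookup. The sketch below assumes you build one fix package per supported product level. The level names (`3.1`, `3.2`) and the fix identifiers are hypothetical, and a real system would read them from your release records rather than from a hard-coded map.

```java
import java.util.Map;
import java.util.Optional;

// Hypothetical sketch: the level names and fix identifiers are invented.
class FixSelector {

    // One fix package per supported product level (assumed data).
    private static final Map<String, String> FIX_BY_LEVEL = Map.of(
        "3.1", "widget-fix-001-for-3.1",
        "3.2", "widget-fix-001-for-3.2");

    /** Returns the fix built for the customer's level, or empty if that level has none. */
    static Optional<String> fixFor(String customerLevel) {
        return Optional.ofNullable(FIX_BY_LEVEL.get(customerLevel));
    }

    public static void main(String[] args) {
        System.out.println(fixFor("3.2").orElse("no fix: customer must upgrade first"));
        System.out.println(fixFor("2.9").orElse("no fix: customer must upgrade first"));
    }
}
```

A customer on a level with no entry gets no fix, which makes the consequence of running an unsupported level explicit: they have to upgrade before you can help them.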

Document and test the requirements

You need to document which levels of packages are required, and which are supported. For example, you may say that you support Java 18, 19, 20, and 21. You need to have these environments in your development, support and test systems, so you can recreate customer problems and check that the supported environments still work.

This also applies to remote databases, where the database is not on the same machine as your product.

Does your code need to check that it is running on supported levels? It can make problem diagnosis much easier if you know whether the customer is running on supported levels of software.
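If you decide the answer is yes, a startup check could look something like the sketch below. It uses `Runtime.version().feature()`, which is available from Java 10 onwards, to find the running Java level. The supported set simply mirrors the example levels mentioned earlier, and the class name and message wording are assumptions. Whether to only log a warning, as here, or to refuse to start is a policy decision for your product.

```java
import java.util.Set;
import java.util.TreeSet;

// Hypothetical sketch: the supported levels mirror the example in the text.
class SupportedLevelCheck {

    // Sorted so that the levels print in ascending order in messages.
    static final Set<Integer> SUPPORTED_JAVA = new TreeSet<>(Set.of(18, 19, 20, 21));

    static boolean isSupported(int featureVersion) {
        return SUPPORTED_JAVA.contains(featureVersion);
    }

    /** Builds a one-line message suitable for the startup log. */
    static String describe(int featureVersion) {
        if (isSupported(featureVersion)) {
            return "Java " + featureVersion + " is a supported level";
        }
        return "WARNING: Java " + featureVersion
            + " is not a supported level; supported levels are " + SUPPORTED_JAVA;
    }

    public static void main(String[] args) {
        // Runtime.version().feature() returns the major release number, e.g. 21.
        int running = Runtime.version().feature();
        System.out.println(describe(running));
    }
}
```

Writing this line to the log at startup means that the first thing a support person sees in a customer's diagnostics is whether the environment is one you have tested.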