If you are interested in getting MQ statistics into CSV format, Bobbee Broderick has extended the pymqi package with a program to produce a CSV file of MQ statistics. I haven’t used it, but I said I would advertise it.


Should all red flags be green?

This question came out of a discussion on an MQ forum, where someone asked: if MQ does one time delivery, how come he got the same message twice?

Different sorts of MQ gets.

There are a variety of patterns for getting a message.

- Destructive get out of sync-point. One application can get the message. It is removed from the system. As part of the MQGET logic the get is committed, so once it has gone it has gone. This is usually used for non-persistent messages. Persistent messages are usually processed within sync-point, but there are valid cases for getting a persistent message out of sync-point.

- Destructive get within sync-point. One application can get the message. The queue manager holds a lock on the message, which makes it invisible to other applications. When the commit is issued (either explicitly or implicitly), the message is deleted. If the application rolls back (either implicitly or explicitly), the lock is released and the message becomes visible on the queue again.

- Browse. One or more applications can get the message when using the get-with-browse option. Sync-point does not come into the picture, because there are no changes to the message.

- One problem with get-with-browse is you can have many application instances browsing the queue, and they may do the same work on a message, wasting resources. To help with this, there is cooperative browse. This is effectively browse and hide. This allows a queue monitor application to browse the message, and start a transaction saying process “this” message. A second instance of the queue monitor will not see the message. If the message has not been got within a specified time interval the “hide” is removed, and so the message becomes visible. See Avoiding repeated delivery of browsed messages.
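The following toy in-memory model in Python makes the visibility rules above concrete. It is not the MQ API. The class and method names (ToyQueue, get, browse, commit, backout) are hypothetical. It has a single unit of work shared by all callers, and it does not model cooperative browse.

```python
from collections import OrderedDict


class ToyQueue:
    """Toy model of MQGET visibility rules (hypothetical names, not the MQ API)."""

    def __init__(self):
        self._msgs = OrderedDict()  # msg_id -> payload, in FIFO order
        self._locked = set()        # ids got within sync-point, not yet committed

    def put(self, msg_id, payload):
        self._msgs[msg_id] = payload

    def _first_visible(self):
        # Locked messages are invisible to other getters and browsers.
        for msg_id in self._msgs:
            if msg_id not in self._locked:
                return msg_id
        return None

    def browse(self):
        """Browse: look at the first visible message without changing anything."""
        msg_id = self._first_visible()
        return None if msg_id is None else (msg_id, self._msgs[msg_id])

    def get(self, syncpoint):
        """Destructive get. Out of sync-point the message is deleted immediately;
        within sync-point it is locked until commit or backout."""
        msg_id = self._first_visible()
        if msg_id is None:
            return None
        if syncpoint:
            self._locked.add(msg_id)
            return msg_id, self._msgs[msg_id]
        return msg_id, self._msgs.pop(msg_id)

    def commit(self):
        # The locked messages are deleted: once it has gone it has gone.
        for msg_id in self._locked:
            del self._msgs[msg_id]
        self._locked.clear()

    def backout(self):
        # The locks are released and the messages become visible again.
        self._locked.clear()


q = ToyQueue()
q.put("01", "payload")
print(q.get(syncpoint=True))  # ('01', 'payload')
print(q.browse())             # None: the locked message is invisible
q.backout()
print(q.browse())             # ('01', 'payload'): visible on the queue again
```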

The customer’s question was: as the get was destructive, how come the message was processed twice? Could this be a bug in MQ?

The careful reader may have spotted why a message can be got twice.

Why the message was processed “twice”.

Consider an application which does the following:

MQGET destructive, in sync-point

Write “processed message id …. ” to the log

Update DB2 record

Commit

You might see the following in the log:

processed message id x'aabbccdd01'.

processed message id x'aabbccdd02'.

processed message id x'eeffccdd17'.

Expanding the transaction to give more details:

MQGET destructive, in sync-point

Write “processed message id …. ” to the log

Update DB2 record

If DB2 update worked then commit

else backout

If there was a DB2 problem, you could get the following on the log:

processed message id x'aabbccdd01'.

processed message id x'aabbccdd01'.

processed message id x'eeffccdd17'.
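The sequence above can be reproduced with a small Python simulation. The queue, the "DB2 update" and the log are hypothetical in-memory stand-ins. The point is that the log line is written before the outcome is known, so a backed-out message is logged again when it is redelivered.

```python
from collections import deque


def process_all(queue, fail_first_attempt):
    """Simulate: MQGET in sync-point, write to the log, update DB2, then commit or backout.

    queue: deque of message ids; fail_first_attempt: ids whose first DB2 update fails.
    """
    log = []
    already_failed = set()
    while queue:
        msg_id = queue.popleft()                          # MQGET destructive, in sync-point
        log.append(f"processed message id x'{msg_id}'")  # logged before the outcome is known
        db2_ok = msg_id not in fail_first_attempt or msg_id in already_failed
        if not db2_ok:
            already_failed.add(msg_id)
            queue.appendleft(msg_id)  # backout: the message is back on the queue
        # otherwise commit: the message is gone for good
    return log


for line in process_all(deque(["aabbccdd01", "eeffccdd17"]), {"aabbccdd01"}):
    print(line)
```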

You then say “Ah Ha, MQ delivered the message twice”. That is true, but you should be saying “Ah Ha, MQ delivered the message but the application didn’t want it. The second time MQ delivered it, the application processed it”. Perhaps change the MQ phrase to “MQ does one time successful delivery”.

Why is this blog post called Should all red flags be green?

A proper programmer (as opposed to a coder) will treat an unsuccessful transaction as a red flag, and take action because it is an abnormal situation. For example, write a message to an error log:

- Transaction ABCD rolled back because “DB2 deadlock on ACCOUNTS table”

- Transaction ABCD rolled back because “MQ PUT to REPLYQUEUE failed – queue full”

- Transaction ABCD rolled back because “CICS is shutting down”
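One way to make sure every rollback raises a red flag is to run each transaction through a wrapper that backs out and writes a message like the ones above. The following is a minimal Python sketch. RollbackReason, run_transaction and the commit/backout callables are hypothetical stand-ins for your own transaction manager.

```python
import logging

logging.basicConfig(level=logging.INFO)
error_log = logging.getLogger("transactions")


class RollbackReason(Exception):
    """Raised by a (hypothetical) transaction step to say why the work must be backed out."""


def run_transaction(name, steps, commit, backout):
    """Run each step; commit if all succeed, otherwise back out and log a red flag.

    Returns None on success, or the red-flag message on rollback.
    """
    try:
        for step in steps:
            step()
    except RollbackReason as reason:
        backout()
        message = f'Transaction {name} rolled back because "{reason}"'
        error_log.error(message)
        return message
    commit()
    return None


def update_accounts():
    raise RollbackReason("DB2 deadlock on ACCOUNTS table")


run_transaction("ABCD", [update_accounts], commit=lambda: None, backout=lambda: None)
```

The wrapper makes the rollback visible. It is then up to the architects and systems programmers to act on the messages.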

Architects and systems programmers can look at these messages and take action.

For example, with DB2, investigate how long locks are held. Can you reduce the time the lock is held, perhaps by rearranging the work within the unit of work? For example, use “MQGET, MQPUT reply, DB2 update, commit” instead of “MQGET, DB2 update, MQPUT of reply, commit”.

For MQ queue full, make the maximum queue depth bigger, or find out why the queue wasn’t being drained.

CICS shutting down: you will always get some reasons to roll back.

Once you have put an action plan in place to reduce the number of red flags, you can mark the action item complete, change its status from red to green and keep the project managers happy (who love green closed action items).

Note: This may be a never ending task!

Afterthought

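In the online discussion, Morag pointed out that perhaps the same message was put twice, which would show the same symptoms. This could happen if the message was put out of sync-point and the transaction then rolled back. The rollback does not remove a message put out of sync-point, so when the transaction is rerun the message is put again.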

Upgrading to MQ 9.2.1 on Ubuntu using apt was easy, but got messy.

Installing MQ 9.2.1 over an earlier release of MQ was almost easy!

I started here and downloaded the developer edition into ~/MQ921.


- I had to cd ~/MQ921/MQServer to run the license.sh script.

- I could not edit a file in /etc/apt/sources.list.d because I was not authorised, and using sudo gedit failed with:

- Invalid MIT-MAGIC-COOKIE-1 key
Unable to init server: Could not connect: Connection refused
Gtk-WARNING **: 10:34:55.390: cannot open display: :0

- I could have used xhost +local: to fix this problem.

- I used the filename ibmmq921-install.list instead of the suggested ibmmq-install.list because I already had an ibmmq.list file in the directory.

- I created ibmmq921-install.list in my home directory and then used sudo mv ibmmq921-install.list /etc/apt/sources.list.d/ .

- Initially I forgot to do sudo apt update – so the install did not find the updated file.

- When I ran sudo apt update it displayed: 10 packages can be upgraded. Run 'apt list --upgradable' to see them.

- I ran apt list --upgradable and it displayed:

ibmmq-client/unknown 9.2.1.0 amd64 [upgradable from: 9.1.5.0]
ibmmq-gskit/unknown 9.2.1.0 amd64 [upgradable from: 9.1.5.0]
ibmmq-java/unknown 9.2.1.0 amd64 [upgradable from: 9.1.5.0]
ibmmq-jre/unknown 9.2.1.0 amd64 [upgradable from: 9.1.5.0]
ibmmq-man/unknown 9.2.1.0 amd64 [upgradable from: 9.1.5.0]
ibmmq-runtime/unknown 9.2.1.0 amd64 [upgradable from: 9.1.5.0]
ibmmq-samples/unknown 9.2.1.0 amd64 [upgradable from: 9.1.5.0]
ibmmq-sdk/unknown 9.2.1.0 amd64 [upgradable from: 9.1.5.0]
ibmmq-server/unknown 9.2.1.0 amd64 [upgradable from: 9.1.5.0]
ibmmq-web/unknown 9.2.1.0 amd64 [upgradable from: 9.1.5.0]


- I ran sudo apt upgrade; it ran for a short while and ended successfully.

- The command dspmqver showed Version 9.2.1.0 – so success.

- Starting the queue manager with strmqm QMA gave: IBM MQ queue manager ‘QMA’ started using V9.2.1.0.

It got messy

After I had successfully installed MQ, the next time I ran sudo apt update it gave me lots of messages like:

Get:1 file:/home/colinpaice/MQ921/MQServer ./ InRelease

Ign:1 file:/home/colinpaice/MQ921/MQServer ./ InRelease

Get:2 file:/home/colinpaice/MQ921/MQServer ./ Release

Ign:2 file:/home/colinpaice/MQ921/MQServer ./ Release

Adding the file means apt checks it every time. I could not see how any updates were ever going to appear in these files, so I removed the file from /etc/apt/sources.list.d/.

I also deleted the files I downloaded (890 MB) and the unzipped directory (927 MB). (I needed to use sudo rm -r MQ921 because the files are write-protected.)

Not very tidy.

What should I monitor for MQ on z/OS – logging statistics

For the monitoring of MQ on z/OS, there are a couple of key metrics you need to keep an eye on for the logging component, as you sit watching the monitoring screen.

I’ll explain how MQ logging works, and then give some examples of what I think would be key metrics.

Quick overview of MQ logging

- MQ logging has a big (sequential) buffer for logging data, which wraps.

- An application does an MQPUT of a persistent message.

- The queue manager updates lots of values (for example queue depth, last put time) as well as moving data into the queue manager address space. This data is written to log buffers. A 4 KB page can hold data from many applications.

- An application does an MQCOMMIT. MQ takes the log buffers up to and including the current buffer and writes them to the current active log data set. Meanwhile other applications can write to other log buffers.

- The I/O finishes and the log buffers just written can be reused.

- MQ can write up to 128 pages in an I/O. If there are more than 128 buffers to write there will be more than one I/O.

- If application 1 commits, the I/O starts, and then application 2 commits, the I/O for the commit in application 2 has to wait for the first set of disk writes to finish before the next write can occur.

- Eventually the active log data set fills up. MQ copies this active log to an archive data set. This archive can be on disk or tape. This archive data set may never be used again in normal operation. It may be needed for recovery of transactions or after a failure. The Active log which has just been copied can now be reused.
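The 128-page limit gives a quick back-of-the-envelope estimate of how many log write I/Os are needed to force a given amount of data. The following Python sketch assumes the data is packed into full 4 KB pages. In practice a page holds data from many applications and may not be full, so treat the result as a lower bound.

```python
import math

PAGE_SIZE = 4096        # a 4 KB log buffer page
MAX_PAGES_PER_IO = 128  # MQ can write up to 128 pages in one I/O


def pages_needed(bytes_logged):
    """Pages needed if the data were packed into full pages (a simplification)."""
    return math.ceil(bytes_logged / PAGE_SIZE)


def ios_needed(bytes_logged):
    """Minimum number of log write I/Os to force this much data to disk."""
    return math.ceil(pages_needed(bytes_logged) / MAX_PAGES_PER_IO)


for size in (100, 4096, 128 * 4096, 128 * 4096 + 1, 2 * 1024 * 1024):
    print(size, pages_needed(size), ios_needed(size))
```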

What is interesting?

Displaying how much data is logged per second.

Today XXXXXXXXXXXXXXXXXXXX

Last week XXXXXXXXXXXXXXXXXXXXXXXXXXXXX

Yesterday XXXXXXXXXX

Scale (MB/sec): 0, 100, 200

This shows that the logging rate today is lower than last week. This could be caused by:

- Today is just quieter than last week

- There is a problem and there are fewer requests coming into MQ. This could be caused by problems in another component, or a problem in MQ. When using persistent messages the longest part of a transaction is the commit and waiting for the log disk I/O. If this I/O is slower it can affect the overall system throughput.

- You can get the MQ log I/O response times from the MQ log statistics.
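A logging-rate chart like the one above needs only two numbers per interval: the bytes written to the log and the length of the interval. The following Python sketch converts them to MB/sec and draws a text bar in the same style. The function and parameter names are hypothetical. It does not say which statistics field supplies the byte count, and the sample values are made up for illustration.

```python
def log_rate_mb_per_sec(bytes_logged, interval_seconds):
    """Average logging rate over one statistics interval, in MB/sec (1 MB = 2**20 bytes)."""
    return bytes_logged / interval_seconds / 2**20


def text_bar(label, value, scale_max, width=40):
    """One bar of a text chart: `width` characters of X represent `scale_max`."""
    filled = round(width * min(value, scale_max) / scale_max)
    return f"{label:<10} {'X' * filled}"


# Hypothetical samples: bytes logged during a 60 second interval.
samples = {
    "Today": 6_000 * 2**20,
    "Yesterday": 3_000 * 2**20,
    "Last week": 8_700 * 2**20,
}
for label, bytes_logged in samples.items():
    rate = log_rate_mb_per_sec(bytes_logged, 60)
    print(text_bar(label, rate, scale_max=200))
```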

Displaying MQ log I/O response time

You can break down the time spent doing I/O into the following areas:

- Scheduling the I/O – getting the request into the I/O processor on the CPU

- Sending the request down to the disk controller (for example a 3990)

- Transferring data

- The I/O completes and sends an interrupt to z/OS; z/OS has to catch this interrupt and wake up the requester.

Plotting the I/O time does not give an entirely accurate picture, as the time to transfer the data depends on the amount of data to transfer. On a well run system there should be enough capacity so the other times are constant. (I was involved in a critical customer situation where the MQ logging performance “died” every Sunday morning. They did backups, which overloaded the I/O system).

In the MQ log statistics you can calculate the average I/O time. There are two sets of data for each log:

- The number of requests, and the sum of the times of the requests, to write 1 page. This should be pretty constant, as the data covers I/Os where only one 4 KB page was transferred.

- The number of requests, and the sum of the times of the requests, to write more than 1 page. The average I/O time will depend on the amount of data transferred.

- When the system is lightly loaded, there will be many requests to write just one page.

- When big messages are being processed (over 4 KB) you will see multiple pages per I/O.

- If an application processes many messages before it commits you will get many pages per I/O. This is typical of a channel with a high achieved batch size.

- When the system is busy you may find that most of the I/Os write more than one page, because many requests to write a small amount of data fill up more than one page.

I think displaying the average I/O times would be useful. I haven’t tried this in a customer environment (as I don’t have a customer environment to use). So if the data looks like:

Today XXXXXXXXXXXXXXXXXXXXXXXX

Last week XXXXXXXXXXXXXXXXXXXXXXXXXXXXX

One hour ago XXXXXXXXXXXXXXXXXXX

Scale (time in ms): 0, 1, 2, 3

it gives you a picture of the I/O response time.

- The dark green part of each bar is for I/Os that write just one page; its size should be constant.

- The light green part is for I/Os that write more than one page; its size will change slightly with load. If it changes significantly, this indicates a problem somewhere.

Of course you could just display the total I/O response time = (total duration of I/Os) / (total number of I/Os), but you lose the extra information about the writing of 1 page.
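The averages described above are simple ratios. The following Python sketch keeps the single-page and multi-page figures separate, and also computes the combined average, so you can see what is lost by combining them. The field names are hypothetical, not the names used in the MQ log statistics records.

```python
from dataclasses import dataclass


@dataclass
class LogWriteStats:
    """Counts and summed times for one log (hypothetical field names)."""
    single_page_ios: int
    single_page_ms: float  # total time for I/Os that wrote exactly one page
    multi_page_ios: int
    multi_page_ms: float   # total time for I/Os that wrote more than one page


def average(total_ms, count):
    return total_ms / count if count else 0.0


def summarise(s):
    return {
        "single_page_avg_ms": average(s.single_page_ms, s.single_page_ios),
        "multi_page_avg_ms": average(s.multi_page_ms, s.multi_page_ios),
        "overall_avg_ms": average(s.single_page_ms + s.multi_page_ms,
                                  s.single_page_ios + s.multi_page_ios),
    }


print(summarise(LogWriteStats(single_page_ios=1000, single_page_ms=500.0,
                              multi_page_ios=250, multi_page_ms=375.0)))
```

A drift in single_page_avg_ms points at the I/O subsystem. The overall average also moves when the mix of single-page and multi-page writes changes.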

Reading from logs

If an application using persistent messages decides to roll back:

- MQ reads the log buffers for the transaction’s data and undoes any changes.

- It may be that the data is old and no longer in the log buffers, so it is read from the active log data sets.

- It may be that the request is really old (for example half an hour or more), in which case MQ reads from the archive logs (perhaps on tape).

Looking at an application doing a rollback, where MQ has to read from the log:

- Reading from buffers is OK. A large number indicates an application problem or a DB2 deadlock type problem. You should investigate why there is so much rollback activity.

- Reading from active logs: this should be infrequent. It usually indicates an application coding issue where the transaction took too long before commit, perhaps due to a database deadlock, or bad application design (where there is a major delay before the commit).

- Reading from archive logs: really bad news. This should never happen.
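These rules of thumb map onto green, orange and red colours in a chart. The following is a minimal Python sketch. It assumes you already have per-interval counts of log reads from buffers, active logs and archive logs, and the parameter names are hypothetical.

```python
def rollback_read_colour(from_buffers, from_active, from_archive):
    """Colour for an interval, driven by the worst place MQ had to read from."""
    if from_archive > 0:
        return "red"     # archive log reads: this should never happen
    if from_active > 0:
        return "orange"  # units of work running too long before commit
    if from_buffers > 0:
        return "green"   # normal, but a high rate still means too many rollbacks
    return "none"        # no rollback reads at all


print(rollback_read_colour(from_buffers=120, from_active=3, from_archive=0))
```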

Displaying reads from LOGS

Today XXXXXXXXXXXXXXXXXXXXXXXX

Last week X

One hour ago XXXXX

Scale (rate): 0, 10, 20, 40

In this chart, green is “read from buffer”, orange is “read from active log”, and red is “read from archive log”. Today looks like a bad day.

What no one tells you about defining your RACF resources – and how to do it for MQ.

Introduction

The RACF documentation has a lot of excellent reference materials describing the syntax of the commands, but I could not find much useful information on how to set up RACF specifically for products like MQ, CICS, Liberty etc.

It is a bit like saying programming has the following commands: load, store, branch; but failing to tell you that you can do wonderful things like draw Mandelbrot pictures using these instructions.

I set up MQ on my z/OS system as an enterprise user, though I have an enterprise with just one person in it: me! Taking this view shows what you need to configure.

I am not a RACF expert – but have learned as I go along. I believe this blog post is accurate – but I may have missed some set up considerations.

In this blog post I’ll cover

- Security roles

- RACF concepts – class and profile

- Controlling who can create profiles and how to limit what they can create

- MQ profiles

- Planning for MQ

- How do you copy the security profiles for a new queue manager?

A typical enterprise – from a security perspective

In a typical enterprise there are different departments

- The security team responsible for the overall set up of security, ensuring that configurations are up to date (userids which are no longer needed are deleted).

- product teams are responsible for defining the security profiles within their products, protecting resources, and giving people access to facilities.

- most developers do not have the authority to define profiles or give access. Many developers do not have a z/OS logon.

What do you need to protect?

There are four types of things you can protect:

- commands

- resources

- “logging on” or “connecting to”

- turning off security, or making powerful commands generally available

For example, for z/OS:

- commands

  - being able to issue z/OS commands

  - being able to issue TSO commands

- resources

  - data sets

- logging on

  - which systems you can log on to

- turning off security

  - changing the RACF configuration

For MQ:

- commands

  - being able to issue MQ commands to configure the queue manager

  - being able to issue commands to define MQ queues etc

- resources

  - queues, channels etc

- logging on

  - which queue managers you can use, for example connecting from batch but not from CICS

- defining the switches to disable parts of MQ security checking.

How do you protect a resource?

Resources are defined in classes. For example

- class OPERCMDS for z/OS console commands

- class MQCMDS for MQ commands

- class MQQUEUE for MQ queues

- class SERVER for managing access to servers such as Liberty and WAS

You need to go down a level, and protect resources within a class. You may want to allow one group of people to define resources for production and another group to define resources for test. For example, MQOPS may be allowed to define profiles for PROD… and TEST…, while TESTOPS is only allowed to define profiles for TEST….

You need CLass AUTHorisation (CLAUTH) on a userid to be able to define a resource. CLAUTH cannot be given to a group.

ALTUSER ADCDA CLAUTH(MQCMDS)

With this command userid ADCDA can now use commands like

RDEFINE MQCMDS MQPA.DISPLAY.** UACC(NONE) OWNER(MQM)

This says

- Create an entry for class MQCMDS

- Queue manager MQPA, any DISPLAY command, so DISPLAY USAGE, and DISPLAY QLOCAL would be covered

- No universal access

- The resource is owned by MQM. If this is a group, anyone with group special in group MQM can issue the PERMIT command on the resource.

How specific a profile do I need?

For harmless commands, such as DISPLAY, you can have a general profile MQPA.DISPLAY.* to cover all DISPLAY commands.

For commands that can change the system, you should use specific profiles, for example

RDEFINE MQCMDS MQPA.DEFINE.PSID UACC(NONE) OWNER(MQM)

RDEFINE MQCMDS MQPA.DEFINE.QLOCAL UACC(NONE) OWNER(MQM)

PERMIT MQPA.DEFINE.PSID CLASS(MQCMDS) ACCESS(ALTER) ID(MQOP1)

PERMIT MQPA.DEFINE.QLOCAL CLASS(MQCMDS) ACCESS(ALTER) ID(MQAMD1)

If you use a single MQPA.DEFINE.** profile, then administrators can give themselves access to the operator DEFINE commands.
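With many specific profiles, generating the statements from a small table reduces typing errors. The following Python sketch (the function name is hypothetical) emits one RDEFINE per distinct profile followed by the PERMITs, in the same form as the commands above. The access level is whatever you pass in, so check the MQ documentation for the level each command needs.

```python
def command_profiles(qmgr, grants, owner="MQM"):
    """grants: list of (command, access, id) tuples, e.g. ("DEFINE.QLOCAL", "ALTER", "MQAMD1").

    Returns RDEFINE statements (one per distinct profile) followed by PERMIT statements.
    """
    # dict.fromkeys removes duplicate profiles while keeping their first-seen order.
    profiles = dict.fromkeys(f"{qmgr}.{command}" for command, _, _ in grants)
    rdefines = [f"RDEFINE MQCMDS {p} UACC(NONE) OWNER({owner})" for p in profiles]
    permits = [
        f"PERMIT {qmgr}.{command} CLASS(MQCMDS) ACCESS({access}) ID({who})"
        for command, access, who in grants
    ]
    return rdefines + permits


for statement in command_profiles("MQPA", [
    ("DEFINE.PSID", "ALTER", "MQOP1"),
    ("DEFINE.QLOCAL", "ALTER", "MQAMD1"),
]):
    print(statement)
```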

Limiting what profiles a user can manage

If you have RACF GENERICOWNER enabled (this is a system-wide option), you can create a generic profile owned by a group. People in that group can then define more specific profiles only within the names it covers.

Turn on GENERICOWNER:

SETROPTS GENERICOWNER

Create a top-level, catch-all profile:

RDEFINE MQCMDS ** UACC(NONE) OWNER(SYS1)

Create a profile limiting people in group ADCD to defining resources with names MQPC.**:

RDEFINE MQCMDS MQPC.** UACC(NONE) OWNER(ADCD)

If userid ADCDA in group ADCD tries to create a profile

RDEFINE MQCMDS MQPC.AA3 UACC(NONE)

it works, but

RDEFINE MQCMDS MQPZ.AA UACC(NONE)

gives ICH10103I NOT AUTHORIZED TO DEFINE MQPZ.AA.

The owner of a profile can give authority to anyone; there are no limits or checks.
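The GENERICOWNER behaviour above can be illustrated with a simplified Python model. It finds the most specific existing generic profile that covers the new name, and allows the RDEFINE only if one of the user's groups owns it. This approximation is not RACF's exact algorithm:

- Wildcards are reduced to `*` (within a qualifier), `**` (any number of qualifiers) and `%` (one character).
- "Most specific" is approximated by counting non-wildcard characters.
- CLAUTH and group-SPECIAL checks are not modelled.

The function names are hypothetical.

```python
import re


def profile_regex(profile):
    """Translate a generic profile name into a regex (simplified RACF rules)."""
    parts, i = [], 0
    while i < len(profile):
        if profile.startswith(".**", i):
            parts.append(r"(\..*)?")   # .** : zero or more further qualifiers
            i += 3
        elif profile.startswith("**", i):
            parts.append(r".*")        # ** on its own matches everything
            i += 2
        elif profile[i] == "*":
            parts.append(r"[^.]*")     # * : any characters within one qualifier
            i += 1
        elif profile[i] == "%":
            parts.append(r"[^.]")      # % : exactly one character
            i += 1
        else:
            parts.append(re.escape(profile[i]))
            i += 1
    return re.compile("".join(parts))


def covering_profile(profiles, name):
    """The most specific existing profile that matches `name` (approximation)."""
    matches = [p for p in profiles if profile_regex(p).fullmatch(name)]
    return max(matches, key=lambda p: len(re.sub(r"[*%]", "", p)), default=None)


def may_define(profiles, name, user_groups):
    """profiles: profile name -> owning group; user_groups: groups the user is in."""
    covering = covering_profile(profiles, name)
    if covering is None:
        return True  # nothing covers the name: only the CLAUTH check applies (not modelled)
    return profiles[covering] in user_groups


profiles = {"**": "SYS1", "MQPC.**": "ADCD"}
print(may_define(profiles, "MQPC.AA3", {"ADCD"}))  # True
print(may_define(profiles, "MQPZ.AA", {"ADCD"}))   # False: covered only by ** (SYS1)
```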

Creating profiles for MQ

Using the categories described above:

- MQ commands

  - being able to issue MQ commands to configure the queue manager

  - being able to issue commands to define MQ queues etc

- MQ resources

  - queues, channels etc

- connecting to MQ

  - which queue managers you can use

- defining the switches to disable parts of MQ security checking.

MQ Commands

Commands can be issued from:

- the operator console (SDSF)

- the MQ ISPF panels, which put messages to the SYSTEM.COMMAND.INPUT.QUEUE

- applications putting messages to the SYSTEM.COMMAND.INPUT.QUEUE

- applications using PCF to the SYSTEM.COMMAND.INPUT.QUEUE

If command checking is enabled, commands are checked using the MQCMDS class.

For commands sent via the SYSTEM.COMMAND.INPUT.QUEUE, the user also needs permission to put to the queue, and the command is checked using the MQCMDS class.

MQ resources

The queuing resources have the following classes; the MX… classes are for MiXed case names. A completely upper case queue name can still be protected if you choose to use the MXQUEUE class: “upper case” names are a subset of the “mixed case” names, and MYQUEUE is different from MyQueue.

- MQQUEUE,MXQUEUE queue resources

- MQPROC, MXPROC process (for example triggering)

- MQNLIST, MXNLIST name list

- MXTOPIC topics – Topics are always mixed case.

Connecting to MQ

- MQCONN: you define resources like MQPA.BATCH in CLASS(MQCONN)

Defining switches to disable parts of MQ security checking, and subset checks

- MQADMIN, MXADMIN. These classes are used mainly for holding profiles for administration-type functions, for example:

  - Profiles for IBM MQ security switches

  - The RESLEVEL security profile

  - Profiles for alternate user security

  - The context security profile

  - Profiles for command resource security

For example, the following turns off all RACF checking for the queue manager:

RDEFINE MQADMIN MQPA.NO.SUBSYS.SECURITY

You can set up security so people are authorised to only a subset of objects.

You can set up

RDEFINE MQADMIN MQPA.QUEUE.TEST* OWNER(MQPAOPS)

to allow people access to a subset of queues, in this case queues beginning with TEST on queue manager MQPA. A user would need to be authorised to the MQCMDS profile MQPA.DEFINE.QLOCAL (or hlq.DEFINE.**), and to the MQADMIN profile MQPA.QUEUE.TEST*.

A thought on the MQ profile design.

It feels like the security was not well defined in this area. You want to restrict someone’s access to a subset of queues, but if you give them the authority to create MQADMIN profiles, they can also turn MQ security off by creating MQADMIN MQPA.NO.SUBSYS.SECURITY!

You can solve this using GENERICOWNER (which is optional) and

RDEFINE MQADMIN MQPA.NO.** UACC(NONE) OWNER(THEBOSS)

Looking back, rather than depending on the GENERICOWNER facility, I would have set up a class MQSWITCH to allow only the site RACF coordinator to define a switch and so turn off security.

Planning for security

You need to identify:

- the classes of profiles (MQCMDS, MQQUEUE, z/OS OPERCMDS)

- the subsystems being protected (MQ, DB2)

- the areas of profiles, for example TEST queues, or production tables for the PAYROLL application

- the roles of people and what they are expected to do; map each role to a group

- for each subsystem and class of profile, what each role can do, for example:

  - Production, read-only operator commands, roles: all roles

  - Production, DEFINE PAGESET commands, roles: members of ZOPER group

  - Production, DEFINE QUEUE commands, roles: members of PRODADMN group

  - Test, DEFINE PAGESET commands, roles: members of ZOPER and TESTOPER groups

- the hierarchy of groups. If you have defined a profile with owner TESTOPER, people can create resources in this group

  - if they are in the TESTOPER group,

  - or if they have group-SPECIAL authority over the group which owns the TESTOPER profile

- the profiles to define: the general MQPA.DISPLAY.**, and the specific MQPA.DEFINE.PSID, MQPA.DEFINE.QLOCAL

Another thought on MQ security design.

When MQ started, 25+ years ago, this was before Sysplex: there was only a single LPAR, and typically only one queue manager, DB2 etc on each LPAR. These days people have many “identical” queue managers, which may or may not be in a QSG.

When you create a new queue manager you have to replicate the security profiles, so copy all the profiles from MQPA…. to MQPB….
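If you already have the RDEFINE and PERMIT statements for MQPA as text (however you obtained them), producing the MQPB versions is a text substitution on the first qualifier. The following is a minimal Python sketch; the function name is hypothetical. It only renames profile names that start with the queue manager name followed by a period. User and group names such as MQPAOPS are left alone on purpose, so decide separately whether the new queue manager needs its own groups.

```python
import re


def copy_profiles(statements, old_qmgr, new_qmgr):
    """Rewrite profile names OLD.xxx to NEW.xxx in RACF statements."""
    # Match the queue manager name only as a whole first qualifier: not preceded by a
    # character that could be part of a name or an earlier qualifier, and followed by ".".
    pattern = re.compile(rf"(?<![A-Z0-9#@$.]){re.escape(old_qmgr)}\.")
    return [pattern.sub(f"{new_qmgr}.", s) for s in statements]


mqpa = [
    "RDEFINE MQCMDS MQPA.DISPLAY.** UACC(NONE) OWNER(MQM)",
    "RDEFINE MQADMIN MQPA.QUEUE.TEST* OWNER(MQPAOPS)",
    "PERMIT MQPA.DISPLAY.** CLASS(MQCMDS) ACCESS(READ) ID(MQPAOPS)",
]
for statement in copy_profiles(mqpa, "MQPA", "MQPB"):
    print(statement)
```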

With hindsight it may have been better to

- define profiles with a generic name prefix, eg MQHLQ, so you would have MQHLQ.DEFINE.**

- have a queue manager option SECPFX=MQHLQ which points to these profiles

- have a class SERVER profile MQ.MQHLQ and grant the queue manager userid access to it.

How do you copy the security profiles for a new queue manager?

I could find no easy way of doing this. When I worked for IBM I had some REXX code which used IRRXUTIL to extract information from the RACF database and rebuild the RDEFINE and PERMIT statements.

You could also use the RACF database unload utility (IRRDBU00) to unload the database into a file, but most people are not likely to have access to this.

What no one tells you about setting up your RACF groups – and how to do it for MQ.

Introduction

The RACF documentation has a lot of excellent reference materials describing the syntax of the commands, but I could not find much useful information on how to set up RACF specifically for products like MQ, CICS, Liberty etc.

It is a bit like saying programming has the following commands: load, store, branch; but failing to tell you that you can do wonderful things like draw Mandelbrot pictures using these instructions.

You need to plan your group structure before you try to implement security, as it is hard to change once it is in place.

The big picture

You can set up a hierarchy of groups so the site RACF person can set up a group called MQ, and give the MQ team manager authority to this group.

The manager can

- define groups within it

- connect users to the group

- give other people authority to manage the group.

We can set up the following group structure

- MQM

  - MQOPS – for the MQ operators

    - MQOPSR for operators who are allowed to issue only read (display) commands

    - MQOPSW for operators who can issue all commands, display and update

  - MQADMS – for the MQ administrators

    - MQADMR – for MQ administrators who can only use display commands

    - MQADMW – for MQ administrators who can use all commands

  - MQWEB…

You should place an operator’s userid in only one of the groups MQOPSR or MQOPSW, as these are used to control access. MQM, MQOPS, MQADMS and MQWEB are just used for administration.

You permit groups MQOPSR and MQOPSW to issue a display command, but only permit group MQOPSW to issue the SET command.

Setting up groups to make it easy to administer

A group needs an owner which administers the group. The owner can be a userid or a group.

A group has been set up called MQM, and my manager has been made the owner of it.

My manager has connected my userid PAICE to the MQM group with group special.

CONNECT PAICE GROUP(MQM) SPECIAL

I can define a new group, for example MQOPS:

ADDGROUP MQOPS SUPGROUP(MQM) OWNER(MQM) DATA('MQ operators')

The SUPGROUP says it is part of the hierarchy under MQM. I can create the group under MQM because I am authorised. If I try to create a group with SUPGROUP(SYS1) this will fail because I am not authorised to SYS1.

The OWNER(MQM) says people in the group MQM with group special can administer this new group.

Because my userid (PAICE) has group special for MQM, I can now connect users to the new group, for example:

CONNECT ADCDB GROUP(MQMD) AUTHORITY(USE)

I can create another group under MQMD called MQMX, and connect a userid to it:

ADDGROUP MQMX SUPGROUP(MQMD) OWNER(MQMD) DATA('MQ Bottom group')

CONNECT ADCDE GROUP(MQMX) AUTHORITY(USE)

My userid PAICE can administer this because of the OWNER() inheritance up to GROUP(MQM).
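The OWNER() inheritance can be modelled as a walk up the chain of owners. A user with group special in a group can administer every group whose owner chain reaches that group. The following simplified Python sketch uses the owners shown in this example. The function is hypothetical, and it ignores other RACF authorities such as system-wide SPECIAL.

```python
def can_administer(userid, group, owner_of, group_special):
    """owner_of: group -> owner (a group or a userid);
    group_special: userid -> groups in which the user has group special.
    Walk up the OWNER chain starting at `group`."""
    seen = set()
    current = group
    while current is not None and current not in seen:
        if current in group_special.get(userid, set()):
            return True
        seen.add(current)
        current = owner_of.get(current)  # None once we reach a userid or an unknown group
    return False


owner_of = {"MQM": "IBMUSER", "MQMD": "MQM", "MQMX": "MQMD"}
group_special = {"PAICE": {"MQM"}}
print(can_administer("PAICE", "MQMX", owner_of, group_special))  # True: MQMX -> MQMD -> MQM
print(can_administer("ADCDB", "MQMX", owner_of, group_special))  # False
```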

If I list the groups I get

LISTGRP MQM

INFORMATION FOR GROUP MQM

SUPERIOR GROUP=SYS1 OWNER=IBMUSER

SUBGROUP(S)= MQM2 MQMD

USER(S)= ACCESS= ACCESS COUNT= UNIVERSAL ACCESS=

PAICE JOIN 000000 NONE

CONNECT ATTRIBUTES=SPECIAL

LISTGRP MQMD

INFORMATION FOR GROUP MQMD

SUPERIOR GROUP=MQM OWNER=MQM

SUBGROUP(S)= MQMX

USER(S)= ACCESS= ACCESS COUNT= UNIVERSAL ACCESS=

ADCDB USE 000000 NONE

CONNECT ATTRIBUTES=NONE

LISTGRP MQMX

INFORMATION FOR GROUP MQMX

SUPERIOR GROUP=MQMD OWNER=MQMD

NO SUBGROUPS

USER(S)= ACCESS= ACCESS COUNT= UNIVERSAL ACCESS=

ADCDE USE 000000 NONE

CONNECT ATTRIBUTES=NONE

All SUPGROUP() does is define the hierarchy, as we can see from the LISTGRP output. We can display the groups and draw up a picture of the hierarchy. You can use the LISTGRP command repeatedly, or use the DSMON program (EXEC PGM=ICHDSM00) with the control statement

USEROPT RACGRP to get a picture like:

LEVEL GROUP

1 SYS1 (IBMUSER)

2 | MQM (IBMUSER)

3 | | MQMD

4 | | | MQMX

3 | | MQM2 (IBMUSER)
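If you have collected the SUPERIOR GROUP values (for example from repeated LISTGRP commands), you can draw a similar picture with a few lines of Python. This illustrative sketch does not reproduce DSMON's algorithm. Subgroups are printed in alphabetical order, which may differ from DSMON's order.

```python
def group_tree(superior):
    """superior: group -> its SUPERIOR GROUP. Returns report lines, one per group."""
    children = {}
    for group, parent in superior.items():
        children.setdefault(parent, []).append(group)
    roots = sorted({p for p in superior.values() if p not in superior})
    lines = []

    def walk(group, level):
        lines.append(f"{level} {'| ' * (level - 1)}{group}")
        for child in sorted(children.get(group, [])):
            walk(child, level + 1)

    for root in roots:
        walk(root, 1)
    return lines


superior = {"MQM": "SYS1", "MQM2": "MQM", "MQMD": "MQM", "MQMX": "MQMD"}
print("\n".join(group_tree(superior)))
```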

Using OWNER(group) instead of OWNER(userid)

- If you have OWNER(groupname) it is easy to administer the groups. When someone joins or leaves the department, you add or remove the userid from groupname. One change.

- If you have OWNER(userid), then you have to explicitly connect the userid to each group with group special. When there is a new person you have to add the userid to each group individually. When someone leaves the team you have to remove the person’s userid from all of the groups. This could be a lot of work.

Delegation.

You could define an operator MQOP1 and give the userid group-special for group MQOPS. This userid (MQOP1) can be used to add or remove userids in the MQOPSR and MQOPSW groups.

Looking at the MQOPS groups, we could have the following groups and connected userids:

- MQM with MQ security userids PAICE, BOB having group-special

  - MQOPS with the operations manager and deputy MQOP1, MQOP2 having group special

    - MQOPSR with STUDENT1, STUDENT2 who are only allowed to issue display commands

    - MQOPSW with PAICE, TONYH, CHARLIE

  - MQADMS…

and similarly for the MQ administration team.

Userid PAICE can connect userids to all groups. MQOP1 can only connect userids to MQOPSR and MQOPSW, and cannot connect userids to the MQ ADMIN groups.

You use groups MQOPSR and MQOPSW for accessing resources. Groups MQM and MQOPS have no authority to access a resource, they are just to make the administration easier.

You may also want to consider having a group for application development, for example a group called PAYRDEVT under MQM, owned by the manager of the payroll development team.

When the annual userid validation check is done, the development manager does the checks, and tells the security department it has been done.

Permissions

There is no inheritance of permissions. If a userid needs functions available to groups MQMD and MQMX, the user needs to be connected to both groups.

You only connect userids to groups; you cannot connect a group to another group. There may be many groups of userids which are allowed to issue an MQ display command, but only one group who can issue the SET command.

Suggested MQ groups

You need to consider

- production and test environments

- resources shared by queue managers, queue managers with the same configurations in a sysplex which can share definitions

- queue managers as part of a Queue Sharing Group

- queue managers that need isolation, and so may have common operations groups but different administration and programming groups.

You might define

- Group MQPA for the queue manager super group. (MQ, Production system, A)

- Groups for MQWEB. The Web server roles are described here.

- Groups for controlling MQ: operations and administration, read only or update

- Groups for who can connect via batch, CICS etc

- Groups for application usage, who can use which queues

Groups for MQWEB

For MQWEB the MQ documentation describes 4 roles: MQWebAdmin, MQWebAdminRO, MQWebUser, MFTWebAdmin; and there is console and REST access.

Each role should have its own group. Requests from “Admin” and “Read Only” users run with the userid of the MQWEB started task. Requests from “User” run with the signed on user’s authority.

You might set up groups

- MQPAWCO MQPA – MQWebAdminRO Console Read Only.

- MQPAWCU MQPA – MQWebUser Console User only. The request operates under the signed on userid authority.

- MQPAWCA MQPA – MQWebAdmin Console Admin.

- MQPAWRO MQPA – MQWebAdminRO REST Read Only.

- MQPAWRU MQPA – MQWebUser REST User only. The request operates under the signed on userid authority.

- MQPAWRA MQPA – MQWebAdmin REST Admin Only.

- MQPAWFA MQPA – MFTWebAdmin MFT REST Admin.

- MQPAWFO MQPA – MFTWebAdmin MFT REST Read Only.

I would expect most people to be in

- MQPAWCU MQPA – MQWebUser Console User only. The request operates under the signed on userid authority.

- MQPAWRU MQPA – MQWebUser REST User only. The request operates under the signed on userid authority.

so you can control who does what, and get reports on any violations etc. If people use the MQWEB admin roles, requests run under the MQWEB started task userid, so you do not know who tried to issue a command.

Groups for operations

The operations team may be managing multiple queue managers, so you may need groups:

- PMQOPS for Production

  - PMQOPSR

  - PMQOPSW

- TMQOPS for Test

  - TMQOPSR

  - TMQOPSW

If some operators are permitted to manage only a subset of the queue managers, you will need a group structure that can handle this, for example a special group XMQOPS:

- XMQOPS for the special queue manager

  - XMQOPSR

  - XMQOPSW

Groups for administration.

This will be similar to operations.

Groups for end users

This is for people running work using MQ.

Usually there are checks to make sure a userid can connect to the queue manager, using profiles in the MQCONN class. Some customers have a loose security setup, and rely on CICS to check whether the userid is allowed to use a CICS transaction, rather than whether the userid is allowed to access a queue.

No, No, think before you create a naming convention

I remember doing a review of a large customer who had grown by mergers and acquisitions. We were discussing naming conventions, and did they have them.

“Naming conventions”, he said, “we love them. We have hundreds of them around the place”. He said it was too hard and disruptive to try to get it down to a small number of naming conventions.

I saw someone’s MQ configuration and wished they had thought through their naming convention, or asked someone with more experience. This is what I saw:

- The MQ libraries were called CSQ910.SCSQAUTH

- This is OK as it tells you what level of MQ you are using

- It would be good to have a data set alias of CSQ pointing to CSQ910. Without this you have to change the JCL for all jobs, compiles, runs etc. which use CSQ910. When you moved from CSQ810 to CSQ900 you had to change the JCL. If you then decide to go back to CSQ810 for a week, you have to change the JCL again. With the alias it is easy: change the alias, and the JCL does not need to change. Change the alias back, and the JCL still does not need to change.

- The MQ logs were called CSQ710.QM.LOGCOPY1.DS01, … DS02,…DS03

- This shows the classic problem of having the queue manager release as part of the object names. It would have been better to have names like CSQ.QM.LOGCOPY1.DS01 without the MQ version in it.

- The name does include a queue manager name of sorts, but a queue manager name of QM is not very good. If you need another queue manager you will end up with names like QM, QMA, QMB, which is an inconsistent naming scheme.

- It is good to have the queue manager name as part of the data set name, so if the queue manager was QM01 then have CSQ.QM01.

- The page sets were CSQ710.QM.PAGESET0, CSQ710.QM.PAGESET1, CSQ710.QM.PAGESET2, CSQ710.QM.PAGESET3, CSQ810.QM.PAGESET4, CSQ910.QM.PAGESET5

- This shows the naming standard problem as it evolved over time. They added more page sets, and used the MQ release as the High Level Qualifier. The page sets are CSQ710,… CSQ810…, CSQ910… – following the naming standard.

You do not invent a naming convention in isolation. You need to put an architect’s hat on and see the bigger picture: you have production and test queue managers, different versions of MQ, and MQ is just a small part of the z/OS infrastructure.

- People often have one queue manager per LPAR, and name the queue manager after the LPAR.

- You are likely to have multiple machines – for example to provide availability, so plan for multiple queue managers.

- You may want different HLQs to be able to distinguish production queue manager data sets from test queue manager data sets.

- The security team will need to set up profiles for queue managers. Having MQPROD and MQTEST as a HLQ may make it easier to set up.

- The storage team (what I used to call data managers) set up SMS with rules for data set placement. For example production pagesets with a name like MQPROD.**.PSID* go on the newest, fastest, mirrored disks. MQTEST.** go on older disks.

- As part of the SMS definitions, the storage team define how often, and when, to back up data sets. A production page set may be backed up using FlashCopy once an hour. (This is done within the storage subsystem and takes seconds. It works by copying the pointers to the records on disk.) Non-production data sets get backed up overnight.
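The placement rules above use SMS-style filter patterns such as MQPROD.**.PSID*. As a rough model, assuming `*` matches any characters within one qualifier, `%` matches exactly one character, and `**` matches zero or more whole qualifiers, the matching can be sketched in Python. This is not the ACS language itself, and the rule list and storage class names (FASTMIRR, OLDDISK, STANDARD) are hypothetical.

```python
import re


def qualifier_matches(pattern, qualifier):
    """Match one qualifier: '*' is any run of characters, '%' exactly one."""
    regex = "".join(
        ".*" if ch == "*" else "." if ch == "%" else re.escape(ch)
        for ch in pattern
    )
    return re.fullmatch(regex, qualifier) is not None


def _match(pattern_quals, name_quals):
    if not pattern_quals:
        return not name_quals
    if pattern_quals[0] == "**":
        # '**' can absorb any number of qualifiers, including none
        return any(_match(pattern_quals[1:], name_quals[i:])
                   for i in range(len(name_quals) + 1))
    return (bool(name_quals)
            and qualifier_matches(pattern_quals[0], name_quals[0])
            and _match(pattern_quals[1:], name_quals[1:]))


def dsn_matches(pattern, dsn):
    """Match a data set name against a filter pattern, qualifier by qualifier."""
    return _match(pattern.split("."), dsn.split("."))


# Hypothetical placement rules, checked in order; the first match wins.
RULES = [
    ("MQPROD.**.PSID*", "FASTMIRR"),  # newest, fastest, mirrored disks
    ("MQTEST.**", "OLDDISK"),         # older disks
]


def storage_class(dsn, default="STANDARD"):
    for pattern, sc in RULES:
        if dsn_matches(pattern, dsn):
            return sc
    return default
```

With names like MQPROD.QM01.PSID03 the production page sets land on the fast disks, while CSQ710.QM.PAGESET0 matches nothing. That is the practical cost of a naming convention the storage team cannot write rules for.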

Lessons learned

- For the IBM provided libraries, include the VRM in the data set names.

- Define an alias pointing to the current libraries so applications do not need to change JCL. You could do the same in Unix System Services (for example with a symbolic link) for the files in zFS.

- Do not put the MQ release in the queue manager data sets names.

- Use queue manager names that are relevant and scale.

- Talk to your security and storage managers about the naming conventions; what you want protected, and how you want your queue manager data sets to be managed.
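The queue manager data set lessons can be turned into a simple automated check. This sketch assumes release qualifiers look like CSQ710 or CSQ910 (as in the examples above) and that the queue manager name should appear as a whole qualifier; both rules are my assumptions, and the check is only meant for queue manager data sets, not for the IBM-provided libraries where the VRM belongs in the name.

```python
import re

# Assumed form of a release qualifier, e.g. CSQ710 or CSQ910
RELEASE_QUALIFIER = re.compile(r"CSQ\d{3}")


def naming_problems(dsn, qmgr):
    """Return a list of naming-convention problems for a queue manager data set."""
    qualifiers = dsn.split(".")
    problems = []
    if any(RELEASE_QUALIFIER.fullmatch(q) for q in qualifiers):
        problems.append("contains an MQ release qualifier")
    if qmgr not in qualifiers:
        problems.append(f"does not contain queue manager name {qmgr}")
    return problems


for dsn in ["CSQ710.QM.LOGCOPY1.DS01", "MQPROD.QM01.LOGCOPY1.DS01"]:
    print(dsn, naming_problems(dsn, "QM01"))
```

Running this over the catalog entries for a queue manager before go-live is cheaper than renaming page sets later.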

NETTIME does not just mean net time

I saw a post which mentioned NETTIME, where people assumed it was the network time. It is more subtle than that.

If NETTIME is small then don’t worry. If NETTIME is large it can mean

- Slow network times

- Problems processing messages at the remote end

Consider a well behaved channel where there are 2 messages on the transmission queue

[Figure: Sender end | Receiver end]

In this case the NETTIME is the total time less the time at the receiver end. So NETTIME is the network time.

In round numbers

- it takes 2 milliseconds from sending the data to it being received

- get + send takes 0 ms ( the duration of these calls is measured in microseconds)

- receive (when there is data), plus an MQPUT that works, takes 0 ms

- commit takes 10 ms

- it takes 1 ms between sending the response and it arriving.

- “10 ms” is sent in the response message

This is a simplified picture with details omitted.

The sender channel sees 13 ms between the last send and getting the response. 13 ms – 10 ms is 3 ms: the time spent in the network.

Now introduce a queue full situation at the receiver end

[Figure: Sender end | Receiver end]

In round numbers

- it takes 2 milliseconds from sending the data to it being received

- get + send takes 0 ms (it is in microseconds)

- receive (when there is data) takes 0 ms

- the pause and retry took 500 ms

- the second receive and MQPUT takes 0 ms

- commit takes 10 ms

- it takes 1 ms between sending the response and it arriving.

- “10 ms” is sent (as before) in the response message (the time between the channel code seeing the “end of batch” flag and the end of its processing)

- Buffer 3 with the “end of batch” flag was sitting in the TCP buffers for 500 ms

The sender channel sees 513 ms between the last send and getting the response. 513 ms – 10 ms is 503 ms, and it reports this as “the time spent in the network”, when in fact the network time was 3 ms, and 500 ms was spent waiting to put the message.
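The arithmetic in both examples can be checked with a few lines of Python. This is only a model of the sums above, assuming NETTIME is the sender's elapsed time less the time the receiver reports in its response; it is not how the channel code measures anything.

```python
def nettime_ms(sender_elapsed_ms, receiver_reported_ms):
    """NETTIME as the sender sees it: elapsed time from the last send to
    getting the response, less the time the receiver says it spent."""
    return sender_elapsed_ms - receiver_reported_ms


# Well-behaved channel: 2 ms out, 0 ms get/send/receive/put, 10 ms commit, 1 ms back.
normal_elapsed = 2 + 0 + 0 + 10 + 1
# Queue full: the same, plus a 500 ms pause before the MQPUT is retried.
queue_full_elapsed = 2 + 0 + 0 + 500 + 0 + 10 + 1

print(nettime_ms(normal_elapsed, 10))      # 3
print(nettime_ms(queue_full_elapsed, 10))  # 503
```

The receiver reports 10 ms in both cases, because its timer only starts when it sees the “end of batch” flag, so the 500 ms pause is silently charged to the network.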

Regardless of the root cause of the problem, a large NETTIME should be investigated:

- do a TCP ping to do a quick check of the network

- check the error logs at the receiver end

- check events etc to see if the queues are filling up at the receiver end

Using Activity Trace to show a picture of which queues and queue managers your application used.

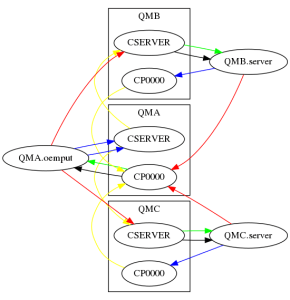

I used the midrange MQ activity trace to show what my simple application, putting a request to a cluster server queue and getting the reply, was doing. As a proof of concept (200 lines of Python), I produced the following

The output is a .png file. You could also create it as an HTML image map, with the nodes and links as clickable HTML links.

I've ignored any SYSTEM.* queues, so the SYSTEM.CLUSTER.TRANSMIT.QUEUE does not appear.

The red arrows show the “high level” flow between queue managers at the “architectural”, hand waving level.

- The application oemput on QMA did a put to the clustered queue CSERVER. There is an instance of the queue on QMB and on QMC, so there is a red line from QMA.oemput to the queue CSERVER on QMB and on QMC.

- The server programs (server, running on QMB and QMC) put the reply message to queue CP0000 on queue manager QMA.

The blue arrows show puts to the queue name the application specified, even though this may map to the SYSTEM.CLUSTER.TRANSMIT.QUEUE (SCTQ). There are two blue lines from QMA.oemput because one message went to QMC.CSERVER, and another went to QMB.CSERVER.

The yellow lines show the path the message took between queue managers. The message was put by QMA.oemput to queue CSERVER; under the covers this was put to the SCTQ. The activity trace record shows the remote queue manager and queue name, and the yellow line links them.

The black line is the get from the local queue.

The green line is the get from the underlying queue. For example, if I had a reply queue called CP0000 with a QAlias of QAREPLY, and the application did a get from QAREPLY, there would be a black line to CP0000, and a green line to QAREPLY.

How did I get this?

I used the midrange activity trace.

On QMA I had the following in mqat.ini:

    ApplicationTrace:
       ApplClass=USER         # Application type
       ApplName=oemp*         # Application name (may be wildcarded)
       Trace=ON               # Activity trace switch for application
       ActivityInterval=30    # Time interval between trace messages
       ActivityCount=10       # Number of operations between trace msgs
       TraceLevel=MEDIUM      # Amount of data traced for each operation
       TraceMessageData=0     # Amount of message data traced

I turned on the activity trace using the runmqsc command

ALTER QMGR ACTVTRC(ON)

I ran some workload, and turned the trace off a few seconds later.

I processed the trace data into a JSON file using

/opt/mqm/samp/bin/amqsevt -m QMA -q SYSTEM.ADMIN.TRACE.ACTIVITY.QUEUE -w 1 -o json > aa.json

I captured the trace on QMB, then on QMC, so I had three files aa.json, bb.json, cc.json. Although I captured these at different times, I could have collected them all at the same time.

jq is a “sed”-like processor for JSON data. I used it to combine these JSON files into one output file (a single array of all the records) which the Python json support can handle.

jq . --slurp aa.json bb.json cc.json > all.json
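If jq is not available, the same combining step can be done in Python. This is a minimal sketch, assuming each file holds a sequence of JSON values one after another (which is the situation `--slurp` is for); the function names are my own.

```python
import json


def slurp_text(text):
    """Parse a string holding one or more JSON values back to back."""
    decoder = json.JSONDecoder()
    values, pos = [], 0
    while True:
        # Skip the whitespace and newlines between values
        while pos < len(text) and text[pos].isspace():
            pos += 1
        if pos == len(text):
            return values
        value, pos = decoder.raw_decode(text, pos)
        values.append(value)


def slurp(paths):
    """Like `jq --slurp`: read every file and return one list of records."""
    records = []
    for path in paths:
        with open(path) as f:
            records.extend(slurp_text(f.read()))
    return records
```

Writing `slurp(["aa.json", "bb.json", "cc.json"])` to all.json with `json.dump` gives one array in the same shape as the jq output.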

The two small Python files are zipped here: AT.

I used the ATJson.py Python script to process the all.json file and extract the key data in the following format:

server,COLIN,127.0.0.1,QMC,Put1,CP0000,SYSTEM.CLUSTER.TRANSMIT.QUEUE,QMC,CP0000,QMA, 400

- server, the name of the application program

- COLIN, the channel name, or “Local”

- 127.0.0.1, the IP address, or “Local”

- QMC, on this queue manager

- Put1, the verb

- CP0000, the name of the object used by the application

- SYSTEM.CLUSTER.TRANSMIT.QUEUE, the queue actually used, under the covers

- QMC, the queue manager the SCTQ is on

- CP0000, the destination (remote) queue name

- QMA, the destination queue manager

- 400, the number of times this was used, so 400 puts to this queue.
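Reading lines in this format back into Python is straightforward with the csv module. In this sketch the field names are my own labels for the columns described above; ATJson.py itself may handle its output differently.

```python
import csv
from collections import namedtuple

# My own labels for the eleven columns described above.
Usage = namedtuple("Usage", [
    "appl", "channel", "conn", "qmgr", "verb", "object",
    "resolved_queue", "resolved_qmgr", "remote_queue", "remote_qmgr", "count",
])


def read_usage(lines):
    """Turn lines of the comma separated summary into Usage records."""
    rows = []
    for fields in csv.reader(lines):
        if not fields:
            continue  # skip blank lines
        fields = [f.strip() for f in fields]
        rows.append(Usage(*fields[:-1], int(fields[-1])))
    return rows


line = "server,COLIN,127.0.0.1,QMC,Put1,CP0000,SYSTEM.CLUSTER.TRANSMIT.QUEUE,QMC,CP0000,QMA, 400"
row = read_usage([line])[0]
print(row.appl, row.verb, row.remote_qmgr, row.remote_queue, row.count)
```

Stripping each field handles the space before the count in the example line.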

I had another Python program, Process.py, which took this table and used the Python graphviz package to draw a graph of the contents. This produces a file in DOT (a graph description language) format, and I used one of the many Graphviz programs to draw the chart.

This shows you what can be done. It is not a turn-key solution, but I am willing to spend a bit of time making it easier to use, so you can automate it. If you are interested, please send me your activity trace data, and I’ll see what I can do.
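Process.py is not shown here. As a hypothetical stand-in, the sketch below writes DOT text directly with the standard library rather than the graphviz package, drawing one red edge per application and destination queue, labelled with the verb and the total count. The input tuple layout (application, queue manager, verb, remote queue manager, remote queue, count) is my assumption.

```python
from collections import Counter


def to_dot(rows):
    """Return DOT text with one edge per (application, destination queue)."""
    edges = Counter()
    for appl, qmgr, verb, remote_qmgr, remote_queue, count in rows:
        edges[(f"{qmgr}.{appl}", f"{remote_qmgr}.{remote_queue}", verb)] += count
    lines = ["digraph mq {"]
    for (src, dst, verb), count in sorted(edges.items()):
        # red, to match the "architectural" arrows between queue managers
        lines.append(f'  "{src}" -> "{dst}" [label="{verb} {count}", color=red];')
    lines.append("}")
    return "\n".join(lines)


print(to_dot([
    ("server", "QMC", "Put1", "QMA", "CP0000", 400),
    ("server", "QMB", "Put1", "QMA", "CP0000", 100),
]))
```

Save the output as mq.dot and render it with, for example, `dot -Tpng mq.dot -o mq.png`.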

When is mid-range accounting information produced?

I was using the mid-range accounting information to draw graphs of usage, and I found I was missing some data.

There is a “Collect Accounting” time for every queue, every ACCTINT seconds (default 1800 seconds = 30 minutes). After this time, the next MQ activity causes the accounting record to be produced. This does not mean you get records every half hour, as you do on z/OS; it means that for long running tasks you get records with a minimum interval of 30 minutes.

Setup

I had a server which got from its input queue and put a reply message to the reply-to-queue.

Once a minute an application started, put messages to this server, got the replies, and ended.

When are the records produced?

Accounting data is produced (if collecting is enabled) when:

- an MQDISC is issued, either explicitly or implicitly

- for long running tasks, the accounting records seem to be produced when the current time is past the “Collect Accounting” time and there is some MQ activity. For example, there were accounting records for a server at the following times:

- The queue manager was started at 12:35:51, and the server started soon afterwards

- 12:36:04 to 13:06:33. An application put a message to the server queue and got the response back. This is 27 seconds after the half hour

- 13:06:33 to 13:36:42 The application had been putting messages to the server and getting the responses back. This is 6 seconds after the half hour

- 13:36:42 to 14:29:48: this interval is 53 minutes. The server did no work from 14:00 to 14:29:48 (as I was having my lunch). At 14:29:48 a message arrived, and the accounting record was written for the server.

- 14:29:48 to 15:00:27: during this time messages were being processed, and the interval is just over 30 minutes.
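The pattern in these timestamps can be modelled in a few lines: a record is cut at the first MQ activity after the interval start plus ACCTINT, and that activity starts the next interval. This is my reading of the observed behaviour, not documented queue manager logic, and it ignores the records written at MQDISC.

```python
from datetime import datetime, timedelta


def accounting_intervals(start, activity, acctint=1800):
    """Model when a long running task's accounting records are written.

    A record is cut at the first MQ activity after start + ACCTINT seconds,
    and the next interval starts at that moment.
    """
    intervals = []
    interval_start = start
    for t in sorted(activity):
        if t > interval_start + timedelta(seconds=acctint):
            intervals.append((interval_start, t))
            interval_start = t
    return intervals


start = datetime(2024, 1, 1, 13, 36, 42)
# No activity over lunch, then a message at 14:29:48
activity = [datetime(2024, 1, 1, 13, 50), datetime(2024, 1, 1, 14, 29, 48)]
for begin, end in accounting_intervals(start, activity):
    print(begin.time(), "to", end.time())
```

With no activity between 14:00 and 14:29:48, the interval that started at 13:36:42 only closes when the next message arrives, which matches the record above.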

What does this mean?

- If you want accounting data with an interval “on the half hour”, you need to start your queue manager “just before the half hour”.

- Data may not be in the time period you expect. If an accounting record was produced at 16:45, the data collected between 16:45 and 17:14 may not appear until the first message is processed the next day. That record will have an interval from 16:45 to 09:00:01 the next day. You may not want to display averages if the interval is longer than 45 minutes.

- You may want to stop and restart the servers every night to have the accounting data in the correct day.
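When you turn accounting records into rates, the 45 minute suggestion above can be applied as a simple guard. This is a minimal sketch; the function name and the choice to return None for records that should not be averaged are my own.

```python
from datetime import datetime, timedelta

MAX_INTERVAL = timedelta(minutes=45)  # threshold suggested above


def rate_per_minute(start, end, count, max_interval=MAX_INTERVAL):
    """Messages per minute for one accounting record, or None when the
    interval is too long (or empty) for an average to be meaningful."""
    length = end - start
    if length > max_interval or length <= timedelta(0):
        return None
    return count / (length.total_seconds() / 60)


print(rate_per_minute(datetime(2024, 1, 1, 14, 0), datetime(2024, 1, 1, 14, 30), 300))
print(rate_per_minute(datetime(2024, 1, 1, 16, 45), datetime(2024, 1, 2, 9, 0, 1), 100))
```

The overnight record is reported as None rather than as a misleadingly low rate spread over 16 hours.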