It is good practice to check that certificates have not been revoked when security is important. There are typically two ways of doing this.

- Have a central server with a list of all revoked certificates, the Certificate Revocation List (CRL), which has to be maintained. This approach tends to be deprecated.

- When a certificate is created, add an extension saying “check this URL to see if the certificate is valid”. This field is added when the Certificate Authority (CA) signs the certificate. A request is sent to the OCSP server, and a response is sent back. This technique is known as the Online Certificate Status Protocol (OCSP). You need an OCSP server for each certificate authority.

This blog post explains OCSP and some of the challenges in using it.

When you create a signed certificate, the CA signs the certificate.

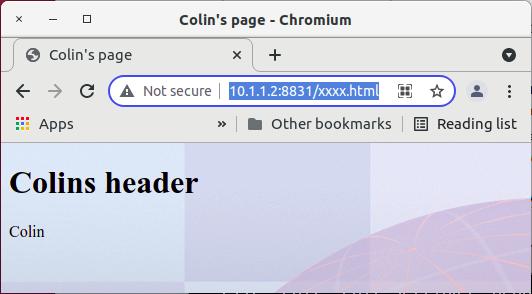

For example

openssl req -config eccert.config … -out $name.csr

openssl ca -config openssl-ca-user.cnf -policy signing_policy -in $name.csr -extensions xxxx

The config file has several sections; my xxxx section contains

[ xxxx ]

authorityInfoAccess = OCSP;URI:http://10.1.0.2:2000

If the server (LDAP in this case) is configured for OCSP, then when it sees the Authority Information Access (AIA) extension, it sends a request to the OCSP server at the URI (10.1.0.2:2000), which responds “good”, “revoked” or “unknown”.
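To see which OCSP URI a certificate carries, you can print it from the AIA extension. This is only an illustration under assumptions: it needs OpenSSL 1.1.1 or later (for `-addext`), and instead of a CA-signed certificate it creates a throwaway self-signed one with the hypothetical name aia-demo.pem, reusing the URI from my xxxx section.

```bash
#!/usr/bin/env bash
# Work in a scratch directory; aia-demo.* are hypothetical file names.
cd "$(mktemp -d)" || exit 1

# Throwaway self-signed EC certificate carrying the same AIA value as the
# [ xxxx ] section (-addext needs OpenSSL 1.1.1 or later).
openssl req -x509 -newkey ec -pkeyopt ec_paramgen_curve:prime256v1 -nodes \
  -keyout aia-demo.key -out aia-demo.pem -days 1 -subj "/CN=aia-demo" \
  -addext "authorityInfoAccess = OCSP;URI:http://10.1.0.2:2000" 2>/dev/null

# Print the OCSP responder URI(s) found in the AIA extension.
ocsp_uri=$(openssl x509 -in aia-demo.pem -noout -ocsp_uri)
echo "$ocsp_uri"
```

Against a real certificate signed by your CA, the same `openssl x509 -noout -ocsp_uri` command prints nothing if there is no AIA OCSP entry, in which case the LDAP server would need a default URI.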

OCSP server

OpenSSL provides a simple OCSP server. You give it

- the port it is to use

- the CA public certificate (so the incoming certificate can be validated against the CA)

- a file listing the certificates that CA has issued, and whether each one is valid or revoked.
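The file is the index.txt that `openssl ca` maintains. Each line is tab-separated: status (V valid, R revoked, E expired), expiry time, revocation time with an optional reason, serial number in hex, file name, and subject DN. The sketch below is a hypothetical helper, not part of OpenSSL: it builds a two-line sample index and looks up a serial number given in decimal (as Wireshark shows it). It treats V as good, R as revoked and anything else as unknown, which is a simplification of what the OpenSSL responder does.

```bash
#!/usr/bin/env bash
cd "$(mktemp -d)" || exit 1

# Hypothetical sample in the openssl ca index.txt layout (tab separated):
# status, expiry, revocation time[,reason], serial (hex), file name, subject DN.
printf 'V\t221105104258Z\t\t024B\tunknown\t/O=MYORG/CN=user1\n' > index.txt
printf 'R\t221105104258Z\t211106151230Z,cessationOfOperation\t024C\tunknown\t/O=MYORG/CN=user2\n' >> index.txt

# ocsp_status_for INDEX SERIAL: SERIAL is decimal; prints good, revoked or unknown.
ocsp_status_for() {
  local hex
  hex=$(printf '%X' "$2")                     # 587 -> 24B (bash integers, so small serials only)
  [ $(( ${#hex} % 2 )) -eq 1 ] && hex="0$hex" # index.txt uses whole bytes: 024B
  awk -F'\t' -v s="$hex" '
    $4 == s { found = 1; print ($1 == "V" ? "good" : ($1 == "R" ? "revoked" : "unknown")) }
    END     { if (!found) print "unknown" }' "$1"
}

ocsp_status_for index.txt 587
```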

You can also configure the LDAP server with a default URI for those certificates that do not have the Authority Information Access (AIA) extension. Typically this only works if you have one Certificate Authority, or an OCSP server that can handle requests for multiple CAs.

For example, if your openssl OCSP server is configured for the CA with DN:CN=CA1,o=MYORG, and you send it a request for a certificate from the CA with DN:CN=CA2,o=myorg, it will not recognize it.

If you use the AIA extension, then you can have two different OCSP servers, one for each CA.

How does it work?

There are two ways of doing OCSP checking.

- The client looks at the certificate and sees there is an OCSP extension. The client sends a request to the OCSP server to check the certificate is valid.

- As part of the TLS handshake, the client sends an extension to the server to say “please do OCSP checking for me”. The server sends the request to the OCSP server and can cache the response. The server also sends the OCSP status to the client as part of the TLS handshake, and the next request for the certificate can use the cached response. This tends to improve performance, as it reduces the number of requests to the OCSP server. This is known as OCSP stapling.

Does OCSP checking slow things down? Yes and no. I’ll cover this after “Caching the responses”.

Caching the responses

The OCSP server may be within your organization, or it may be external. The time to send a request and get the response back may range from milliseconds to seconds.

Servers can typically cache the response to an OCSP query.

The OCSP server may update its certificate list every hour, or perhaps every day. If it is refreshed once a day, your LDAP server does not need to refresh its cached data more than once a day.

The openssl OCSP server has the options -nmin minutes and -ndays days, which set the nextUpdate field in the response, that is, when fresh revocation information will be available. It sends a response like

SingleResponse

certID

hashAlgorithm (SHA-1)

issuerNameHash: 157b5dd0bcdee5b5428e063cf29a1f4e45be7499

issuerKeyHash: 5830af55c7b4d49fc9e3fac91441ef1fd7b215e3

serialNumber: 587

certStatus: good (0)

thisUpdate: 2021-11-05 10:42:58 (UTC)

nextUpdate: 2021-11-05 10:43:58 (UTC)

From nextUpdate the requester knows when the cached value is no longer valid and it must contact the OCSP server again for that certificate. Here the response is valid for 60 seconds (10:42:58 to 10:43:58), because my server was started with -nmin 1.

When the certificate was revoked, the output was
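The cache lifetime is simply nextUpdate minus thisUpdate. A minimal sketch, assuming GNU date (for `-d`) and timestamps in UTC as Wireshark shows them; `validity_seconds` and `cache_state` are hypothetical helper names.

```bash
#!/usr/bin/env bash
# Requires GNU date; -u makes date parse and print in UTC.

# validity_seconds THIS_UPDATE NEXT_UPDATE: how long the response may be cached.
validity_seconds() {
  local this_update next_update
  this_update=$(date -u -d "$1" +%s)
  next_update=$(date -u -d "$2" +%s)
  echo $(( next_update - this_update ))
}

# cache_state NEXT_UPDATE: "fresh" while now < nextUpdate, otherwise "stale".
cache_state() {
  if [ "$(date -u +%s)" -lt "$(date -u -d "$1" +%s)" ]; then
    echo fresh
  else
    echo stale
  fi
}

validity_seconds '2021-11-05 10:42:58' '2021-11-05 10:43:58'   # 60 for the response above
cache_state '2021-11-05 10:43:58'                              # long past, so stale
```

A client cache would only go back to the OCSP server once `cache_state` reports stale for that certificate.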

Online Certificate Status Protocol

responseStatus: successful (0)

responseBytes

ResponseType Id: 1.3.6.1.5.5.7.48.1.1 (id-pkix-ocsp-basic)

BasicOCSPResponse

tbsResponseData

responderID: byName (1)

byName: 0...

producedAt: 2021-11-06 15:15:58 (UTC)

responses: 1 item

SingleResponse

certID...

certStatus: revoked (1)

revoked

revocationTime: 2021-11-06 15:12:30 (UTC)

revocationReason: cessationOfOperation (5)

thisUpdate: 2021-11-06 15:15:58 (UTC)

nextUpdate: 2021-11-06 15:16:58 (UTC)

responseExtensions: 1 item

Extension

Id: 1.3.6.1.5.5.7.48.1.2 (id-pkix-ocsp-nonce)

ReOcspNonce: a7e59577b7d2b3a2

signatureAlgorithm (ecdsa-with-SHA256)

Padding: 0

signature: 3046022100fb264d4c5dbbbf45cce752a8263c4f01631441...

certs: 1 item...

This information is available to the client.

If you write your application properly, the impact of OCSP can be minimized.

For example, consider a scenario where you are using REST requests, each going to one of a number of servers.

- Your application starts.

- It needs to check the validity of the certificate, so it sends a request to the OCSP server. This could take a long time (seconds). Some applications wait up to 15 seconds for a response before timing out.

- The response comes back saying “certificate good, and valid for 1 hour”.

- It sends a request to the server and gets the response back.

- It issues another request (with another TLS handshake). The cached OCSP response is still valid, so there is no need to contact the OCSP server.

- After 1 hour, it resends the request to the OCSP server to re-validate the certificate.

- And so on.
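The scenario amounts to a cache keyed by certificate, refreshed only when nextUpdate has passed. This is a hypothetical sketch (bash 4 or later, for associative arrays): `query_ocsp` is a stand-in that always answers “good, valid for 1 hour” instead of contacting a real OCSP server, and times are passed in as epoch seconds so the behaviour is easy to follow.

```bash
#!/usr/bin/env bash
# Hypothetical OCSP cache keyed by certificate serial number (bash 4+).
declare -A ocsp_status ocsp_next_update
ocsp_queries=0

# Stand-in for a real OCSP request: always "good, valid for 1 hour".
query_ocsp() {
  local serial=$1 now=$2
  ocsp_queries=$(( ocsp_queries + 1 ))
  ocsp_status[$serial]=good
  ocsp_next_update[$serial]=$(( now + 3600 ))
}

# check_cert SERIAL NOW: reuse the cached answer until nextUpdate has passed.
check_cert() {
  local serial=$1 now=$2
  local next=${ocsp_next_update[$serial]:-0}   # 0 means "never asked"
  if (( now >= next )); then
    query_ocsp "$serial" "$now"
  fi
  echo "${ocsp_status[$serial]}"
}

check_cert 024B 1000   # application starts: queries the OCSP server
check_cert 024B 2000   # another TLS handshake: served from the cache
check_cert 024B 5000   # more than an hour later: queries the OCSP server again
echo "OCSP queries: $ocsp_queries"
```

Three checks cost only two OCSP round trips here; with a real one-hour validity and many handshakes, the saving is much larger.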

OCSP stapling

OCSP stapling is very common now. Before it, clients checked the validity of the certificate themselves by contacting the OCSP server. With OCSP stapling, a request is put into the TLS handshake which says “please do the OCSP checks for me and send me the output”. This allows the server to cache the information.

Think of the old days of checking in at the airport, when the person checking you in would staple “checked-in by agent 46” to your paper ticket.

The client requests this by adding the “status_request” extension to the TLS ClientHello message.

The server sends the information it received from the OCSP server back to the client during the handshake (in TLS 1.2 this is a CertificateStatus message sent after the server’s Certificate message).

Note: ldapsearch (from OpenLDAP) sends the status_request extension, but does not handle the response. I get

ldap_sasl_interactive_bind_s: Can’t contact LDAP server (-1) additional info: (unknown error code)

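By default, openssl s_client does not send the status_request extension, so it does not participate in the OCSP checking. Its -status option makes it send the extension and print any OCSP response it gets back.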

Java does support this. A Java application can get the OCSP response messages using the getStatusResponses() method of ExtendedSSLSession. I believe you can decode them using Bouncy Castle.

Setting up LDAP on z/OS to support OCSP

I added the following to a working LDAP system

Environment

GSK_OCSP_URL=http://10.1.0.2:2000

GSK_OCSP_ENABLE=ON

GSK_OCSP_CLIENT_CACHE_SIZE=100

GSK_REVOCATION_SECURITY_LEVEL=MEDIUM

GSK_SERVER_OCSP_STAPLING=OFF

GSK_OCSP_RESPONSE_SIGALG_PAIRS=0603060105030501040304020401

#GSK_OCSP_RESPONSE_SIGALG_PAIRS=0806080508040603060105030501040304020401

GSK_OCSP_NONCE_GENERATION_ENABLE=ON

GSK_OCSP_NONCE_CHECK_ENABLE=ON

GSK_OCSP_NONCE_SIZE=8

Stapling

I set GSK_SERVER_OCSP_STAPLING=OFF because ldapsearch on Ubuntu did not work when it was set to ENDENTITY.

Signature Algorithms

If GSK_OCSP_RESPONSE_SIGALG_PAIRS included any of 0806, 0805 or 0804, I got the messages

GLD1160E Unable to initialize the LDAP client SSL support: Error 113, Reason -99.

GLD1063E Unable to initialize the SSL environment: 466 – Signature algorithm pair is not valid.

In the trace I had 03353003 “Cryptographic algorithm is not supported”, even though these values are listed in the documentation.
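Each GSK_OCSP_RESPONSE_SIGALG_PAIRS entry is four hex digits. Assuming these follow the TLS code points (TLS 1.2 hash/signature pairs, and the TLS 1.3 SignatureScheme values for 08xx), the rejected values 0806, 0805 and 0804 are the RSA-PSS schemes, while my working list contains only ECDSA, RSA (PKCS#1) and DSA pairs. The hypothetical `decode_sigalgs` helper below just splits the string and names the values; it does not check what z/OS supports.

```bash
#!/usr/bin/env bash
# decode_sigalgs STRING: split into 4-hex-digit pairs and name the ones used in this post.
# Assumes TLS code points: 04xx/05xx/06xx = sha256/384/512 with rsa(01), dsa(02), ecdsa(03);
# 0804-0806 = TLS 1.3 rsa_pss_rsae_* schemes.
decode_sigalgs() {
  local s=${1^^} pair name
  while [ -n "$s" ]; do
    pair=${s:0:4}
    s=${s:4}
    case $pair in
      0401) name="sha256/rsa" ;;
      0402) name="sha256/dsa" ;;
      0403) name="sha256/ecdsa" ;;
      0501) name="sha384/rsa" ;;
      0503) name="sha384/ecdsa" ;;
      0601) name="sha512/rsa" ;;
      0603) name="sha512/ecdsa" ;;
      0804) name="rsa_pss_rsae_sha256" ;;
      0805) name="rsa_pss_rsae_sha384" ;;
      0806) name="rsa_pss_rsae_sha512" ;;
      *)    name="not in this table" ;;
    esac
    printf '%s %s\n' "$pair" "$name"
  done
}

decode_sigalgs 0806080508040603060105030501040304020401
```

My responder signed with ecdsa-with-SHA256 (see the response above), which corresponds to 0403 and so is in the working list.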

Nonce

The nonce is used to protect against replay attacks. In your request to the OCSP server you include a nonce (a string of random data), and you expect the same value back in the response message.

In LDAP, nonce generation and checking are off by default, so I set

GSK_OCSP_NONCE_GENERATION_ENABLE=ON

GSK_OCSP_NONCE_CHECK_ENABLE=ON

GSK_OCSP_NONCE_SIZE=8
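GSK_OCSP_NONCE_SIZE appears to be in bytes: 8 bytes is 16 hex digits, the same length as the ReOcspNonce a7e59577b7d2b3a2 in the response shown earlier. The sketch below is hypothetical and only illustrates the check (bash 4 or later): it generates an 8-byte nonce with `openssl rand`, and `nonce_matches` accepts a response only if it echoes the request’s nonce. (The openssl ocsp client adds a nonce to its requests by default; -no_nonce turns that off.)

```bash
#!/usr/bin/env bash
# Hypothetical sketch of the nonce check; needs bash 4+ for ${var,,}.

# An 8-byte nonce, as hex (16 digits), like the one sent in the request.
nonce=$(openssl rand -hex 8)

# nonce_bytes HEX: number of bytes represented by a hex string.
nonce_bytes() { echo $(( ${#1} / 2 )); }

# nonce_matches REQUEST_NONCE RESPONSE_NONCE: succeed only if the response echoes the request.
nonce_matches() { [ "${1,,}" = "${2,,}" ]; }

echo "request nonce: $nonce ($(nonce_bytes "$nonce") bytes)"
nonce_bytes a7e59577b7d2b3a2          # prints 8
```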

Configuration

In the configuration file I had

sslKeyRingFile START1/MQRING

sslCipherSpecs GSK_V3_CIPHER_SPECS_EXPANDED

Setting up the OCSP server on Linux

My CA was on Linux – address 10.1.0.2.

I set up a bash script to run the openssl OCSP server on Linux:

ca="-CA ca256.pem"

index="-index index.txt"

port="-port 2000"

#rsigner="-rsigner rsaca256.pem -rkey rsaca256.key.pem"

#rsigner="-rsigner ecec.pem -rkey ecec.key.pem"

rsigner="-rsigner ss.pem -rkey ss.key.pem"

nextUpdate="-nmin 1"

openssl ocsp $index $ca $port $rsigner $nextUpdate

You need

- -CA … the Certificate Authority’s .pem file

- -index index.txt … the status of the certificates issued by the CA

- -port … the port to listen on

- -rsigner … the certificate used to sign the responses

- -rkey … the private key used to sign the responses

- -nmin … the number of minutes until fresh revocation information is available, which the server puts in the nextUpdate field of each response. Typically this value might be an hour or more, to match how often you update the index file.

It does not log any activity, so I had to use Wireshark to trace the network traffic.
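Because the responder is silent, it can help to exercise the same options without the network. openssl ocsp can also answer a request held in a file (-reqin and -respout instead of -port), and the client side can read that response back with -respin. The sketch below is self-contained and hypothetical: it creates a throwaway EC CA (CN=CA1) and a user certificate with serial 0x24B (587), writes a one-line index.txt by hand instead of using openssl ca, and for simplicity uses the CA itself as the response signer, which avoids the OCSP Signing requirement described next.

```bash
#!/usr/bin/env bash
cd "$(mktemp -d)" || exit 1
ec=(-newkey ec -pkeyopt ec_paramgen_curve:prime256v1 -nodes)

# Throwaway CA and a user certificate with serial 0x24B (587), both hypothetical.
openssl req -x509 "${ec[@]}" -keyout ca.key -out ca.pem -days 1 -subj "/O=MYORG/CN=CA1" 2>/dev/null
openssl req "${ec[@]}" -keyout user.key -out user.csr -subj "/O=MYORG/CN=user1" 2>/dev/null
openssl x509 -req -in user.csr -CA ca.pem -CAkey ca.key -set_serial 0x24B -days 1 -out user.pem 2>/dev/null

# Build an OCSP request for user.pem (no nonce, so the saved response can be checked later).
openssl ocsp -issuer ca.pem -cert user.pem -no_nonce -reqout req.der 2>/dev/null

# respond_and_check: answer req.der from index.txt (as the server would, with -nmin 1),
# then verify the response against the CA and print the certificate status.
respond_and_check() {
  openssl ocsp -index index.txt -CA ca.pem -rsigner ca.pem -rkey ca.key \
    -reqin req.der -respout resp.der -nmin 1 >/dev/null 2>&1
  openssl ocsp -respin resp.der -issuer ca.pem -cert user.pem -CAfile ca.pem -no_nonce 2>/dev/null
}

printf 'V\t301231235959Z\t\t024B\tunknown\t/O=MYORG/CN=user1\n' > index.txt
good_status=$(respond_and_check)

printf 'R\t301231235959Z\t211106151230Z,cessationOfOperation\t024B\tunknown\t/O=MYORG/CN=user1\n' > index.txt
revoked_status=$(respond_and_check)

printf '%s\n\n%s\n' "$good_status" "$revoked_status"
```

The first result should start with “user.pem: good” followed by This Update and Next Update lines one minute apart; after the index line is switched to R, it should report revoked with the cessationOfOperation reason and revocation time, much like the Wireshark decode above.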

Problems using a certificate signed by the CA to sign the response

The rsigner certificate needs to have

Extended Key Usage: critical, OCSP Signing

Without this I got the following in the gsktrace

ERROR check_ocsp_signer_extensions(): extended keyUsage does not allow OCSP Signing

A self-signed certificate worked OK.
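If you want the responder to use its own CA-signed certificate, one way is to add the extension when the CA signs it. A sketch under assumptions: throwaway names (ca.pem, ocsp.pem and ocsp-ext.cnf are hypothetical), an EC key, and OpenSSL 1.1.1 or later for `x509 -ext`. The resulting ocsp.pem and ocsp.key would then go on the -rsigner and -rkey options of the server script.

```bash
#!/usr/bin/env bash
cd "$(mktemp -d)" || exit 1
ec=(-newkey ec -pkeyopt ec_paramgen_curve:prime256v1 -nodes)

# Throwaway CA plus a key and CSR for the OCSP responder (hypothetical names).
openssl req -x509 "${ec[@]}" -keyout ca.key -out ca.pem -days 1 -subj "/O=MYORG/CN=CA1" 2>/dev/null
openssl req "${ec[@]}" -keyout ocsp.key -out ocsp.csr -subj "/O=MYORG/CN=OCSP responder" 2>/dev/null

# The extension the rsigner certificate needs: Extended Key Usage: critical, OCSP Signing.
printf 'extendedKeyUsage = critical, OCSPSigning\n' > ocsp-ext.cnf
openssl x509 -req -in ocsp.csr -CA ca.pem -CAkey ca.key -set_serial 0x1000 \
  -days 1 -extfile ocsp-ext.cnf -out ocsp.pem 2>/dev/null

# Show the extension as OpenSSL sees it.
eku=$(openssl x509 -in ocsp.pem -noout -ext extendedKeyUsage)
echo "$eku"
```

The LDAP keyring must also contain the CA certificate that signed this responder certificate (CN=CA1 in this sketch).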

Testing it

I had several challenges when testing it

- ldapsearch on Linux sends the “I support OCSP stapling” extension, but it objects to the response and ends with “unknown error code”.

- By default, openssl s_client does not send the status_request extension, so the certificate does not get validated.

- Java worked. I used this as a basis, and made a few changes to reflect my system. I needed the following options to run it:

- -Djavax.net.ssl.keyStore=/home/colinpaice/ssl/ssl2/ecec.p12

- -Djavax.net.ssl.keyStorePassword=password

- -Djavax.net.ssl.keyStoreType=pkcs12

- -Djavax.net.ssl.trustStore=/home/colinpaice/ssl/ssl2/dantrust.p12

- -Djavax.net.ssl.trustStorePassword=password

- -Djavax.net.ssl.trustStoreType=pkcs12

Once I changed GSK_SERVER_OCSP_STAPLING=ENDENTITY to GSK_SERVER_OCSP_STAPLING=OFF, I was able to use ldapsearch.

Some OCSP certificates didn’t work

In my OCSP server, I used a certificate signed by the CA to sign the responses sent back to LDAP.

In the GSKtrace I got

ERROR find_ocsp_signer_in_certificate_chain(): Unable to locate signing certificate.

ERROR crypto_ec_token_public_key_verify(): ICSF service failure: CSFPPKV retCode = 0x4, rsnCode = 0x2af8

ERROR crypto_ec_token_public_key_verify(): Signature failed verification

ERROR crypto_verify_data_signature(): crypto_ec_verify_data_signature() failed: Error 0x03353004

There are two connected problems here:

- find_ocsp_signer_in_certificate_chain(): Unable to locate signing certificate.

- rsnCode 0x2af8 (11000): “The digital signature verify ICSF callable service completed successfully but the supplied digital signature failed verification.”

Both came from the same cause: I did not have the correct CA certificate for the OCSP certificate in the LDAP keyring.